I don't care about your database benchmarks (and neither should you)

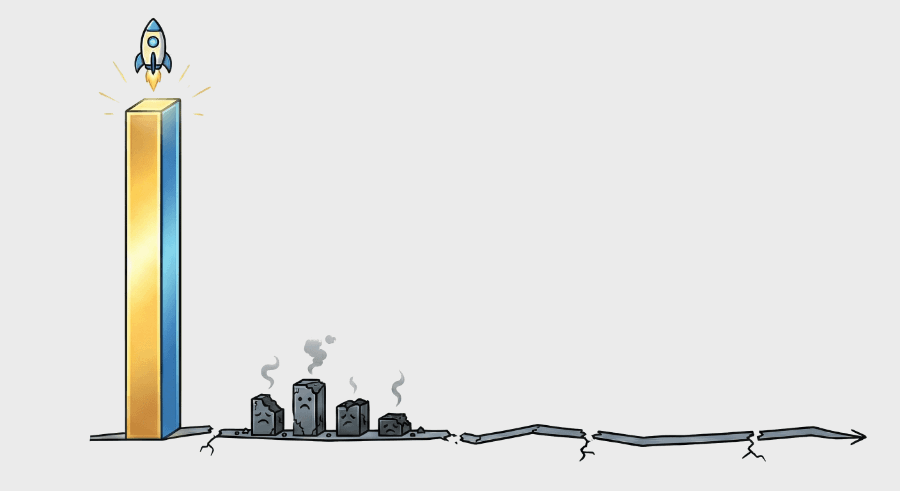

Consider this our declaration of permanent disengagement from “bar chart wars.”

We get it’s good dev marketing, but it’s a waste of time. I’ll explain…

The Convex team has designed and operated database systems that scaled to millions of requests per second. We’ve cared about which AVX instruction sets CPUs supported, which hardware caching layers could buffer writes, how to hide latency in application-specific ways, and all the other performance tricks that eventually matter. I’m confident we can pull off any move our competitors can.

But I wouldn’t be surprised if they can pull off any move we can as well. There are great database engineers working at all these companies.

Still, debating big bar chart variations across wildly different systems ends up being a waste of time, for two main reasons.

#1. They always amount to apples vs. anvils

I’m pretty sure Convex’s CPUs run just as fast as yours. And doubtless your disks sync data just as quickly as ours.

So normally when different systems have wildly different performance characteristics, the benchmark is not actually testing the same thing—even if it purports to be.

Some may not remember the bar chart wars of the early 2010s, so pull up a chair and let me fill you in. In those days, MongoDB smoked everyone by only periodically writing data to disk and, by default, not acking writes to the client. Redis posted scorching numbers by only supporting in-memory datasets and taking a long time to replay the transaction log after a crash (and also by only periodically writing data to disk; fsync games are a perennial favorite).

These designs have their place. But then people would compare these benchmarks to systems like Postgres and shame the “not-web-scale” old tech. Everyone would waste time diving into the silly benchmarks, then they’d rediscover the wild differences in actual system behavior. Suddenly, we’d remember all parties know how to write code, have access to the same machines, and were either building or testing different things. Or both.

And the whole hullabaloo was a giant waste of time. Convex does not want to waste time, so we’re not gonna do it.

But, we don’t want to spoil your fun! So if you want to play along at home, here’s a guide to getting started:

- Is fsync() being called on every write? Are writes being acked?

- Is it ACID? Is there record contention? If so, is the same consistency being guaranteed (strictly serializable, etc.)?

- Are we utilizing multi-AZ semi-sync replication, or are we allowing data loss on machine/AZ failure?

- Is the way request pipelining or mutation buffering works in the client affecting things?

- Are we just measuring network RTT and/or TLS handshake from the client?

- Does the database support larger-than-memory datasets? Does the database recover in O(1) time?

- Is the issue that the benchmark itself is too slow?

- Did you misconfigure your competitor's platform, or are you not understanding the resource allocation scheme on that competitor?
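If you want to see the first item on that list for yourself, here is a minimal Python sketch. It is an illustration under stated assumptions, not how Convex or any other database is implemented: `timed_writes` and `compare` are hypothetical helpers that append fixed-size records to a local file and call `os.fsync()` after every write, after every batch, or never. The workload is identical in all three runs; only the durability promise changes.

```python
import os
import tempfile
import time


def timed_writes(path, records, fsync_every):
    """Append records to path, calling fsync after every `fsync_every` writes.

    fsync_every=1 syncs each write, fsync_every=0 never syncs.
    Returns (elapsed_seconds, number_of_fsync_calls).
    """
    fsync_calls = 0
    start = time.perf_counter()
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    try:
        for i, record in enumerate(records, start=1):
            os.write(fd, record)
            if fsync_every and i % fsync_every == 0:
                os.fsync(fd)  # force the data to stable storage
                fsync_calls += 1
    finally:
        os.close(fd)
    return time.perf_counter() - start, fsync_calls


def compare(num_records=2000, record_size=256):
    """Run the same workload under three durability policies and print timings."""
    records = [b"x" * record_size] * num_records
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "log.bin")
        for label, every in [("every write", 1), ("every 100", 100), ("never", 0)]:
            elapsed, syncs = timed_writes(path, records, every)
            print(f"fsync {label:>11}: {syncs:5d} syncs, {elapsed:.4f}s")


if __name__ == "__main__":
    compare()
```

The exact numbers depend entirely on your disk and filesystem, which is rather the point: a bar chart that does not say which of these policies each system ran under is comparing different promises, not different engineering.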

I promise you, once you run through and resolve all these differences, you’ll fully understand why these systems are performing differently. And that understanding will reveal the design principles behind each system. (Which is actually a really cool exercise if you want to learn about database systems, design variations, and tradeoffs.)

Speaking of principles, here’s one of ours that makes us even less interested in these bar chart wars…

#2. Synthetic benchmarks lead to bad product management, and so are bad for customers

We already mentioned the Convex team's extensive experience building extremely high-scale systems. So, as an exercise, just how scalable should we have made Convex before it was good enough for customers?

- 10,000 requests per second?

- 100,000 requests per second?

- 1M requests per second?

Each of those thresholds takes an order of magnitude more dev-hours to build and operate than the previous one (unless you’re compromising on reliability/consistency/durability—see section #1). So, how far should we go before we put our new persistent abstraction in people’s hands?

We believe: not very far! The first public version of Convex probably (legitimately, this time) did only tens of requests per second. We’re not 100% sure because it did not matter, so we didn’t measure. We just needed to make sure it was useful at all, to anyone!

But the core of that idea is evergreen: all that really matters today is that you’re scaling ahead of what your current customers and prospective customers need.

So we’ve always taken the approach that we should learn from real customer workloads and scale ahead of them based on real data and feedback.

Here’s how we measure success, scaling or otherwise: Do our customers love Convex? Do we keep our promises as they grow? Anything that defocuses us from that is a waste of time.

And nothing is a bigger waste of time than setting a precedent of engaging with every bar chart on Twitter, going “nuh uh!” “uh huh!” back and forth with some other database engineering team until we disentangle signal from noise.

So we ain’t gonna do it. It’s scaling theater, not scaling.

No fun! Do you owe us nothing?

We do owe you something. Every stateful development platform (like a database or BaaS) owes its customers (and prospective customers) as much clarity as possible on:

- Can you all operate my traffic this year? And what I project for next year?

- Even if you can, how much will it cost?

- Do you have a track record of improving the first two answers for me over time, so the decision I make today will only get wiser?

And to that end, Convex has a project that’s been underway for the last few months as part of our upcoming enterprise plan launch.

We’ve designed a few scenarios that are based on actual Convex customers who have been running hundreds of thousands of DAU or more on our platform over the last couple of years. We only base these scenarios on real applications to keep ourselves honest and not tilt the scales in our favor. We’ve also learned that real apps give future customers a more relatable basis for drawing parallels to their own projects when they consider us.

For every scenario, and for every plan & deployment class we offer, we’ll publish:

- The maximum request rate at which the tail latencies are acceptable enough that the site still feels “healthy.” (Raw RPS/QPS is just dumb.)

- The cost to run this load for a month.

- The history of these numbers over time, demonstrating a trend in our customers’ favor.
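To make the first of those numbers concrete, here is a rough Python sketch of how a "maximum healthy request rate" could be derived from load-test samples. Everything in it is an assumption for illustration: the helper names, the nearest-rank p99, and the 100 ms latency budget are hypothetical, not Convex's published methodology or thresholds.

```python
import math
from typing import Dict, List, Optional


def percentile(samples: List[float], pct: int) -> float:
    """Nearest-rank percentile of a non-empty list of latency samples."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def max_healthy_rate(
    latencies_by_rate: Dict[int, List[float]], p99_budget_ms: float
) -> Optional[int]:
    """Highest tested request rate whose p99 stays within the budget.

    Walks the rates in ascending order and stops at the first one that
    blows the budget, so a lucky run at a higher rate does not count.
    Returns None if even the lowest rate is unhealthy.
    """
    healthy = None
    for rate in sorted(latencies_by_rate):
        if percentile(latencies_by_rate[rate], 99) > p99_budget_ms:
            break
        healthy = rate
    return healthy


# Made-up latency samples (ms) for three load levels (requests per second).
runs = {
    100: [10.0] * 99 + [40.0],
    200: [12.0] * 98 + [90.0, 95.0],
    400: [15.0] * 95 + [300.0] * 5,
}

if __name__ == "__main__":
    print(max_healthy_rate(runs, p99_budget_ms=100.0))
```

With these invented samples and a 100 ms budget, the 400 requests per second run is the first to exceed the budget, so the reported figure is 200 even though the system technically served 400. That gap is why a tail-latency ceiling says more than a raw throughput peak.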

We’re doing this because we’ve learned in our conversations with customers that this is the most transparent and useful way to convey what Convex is capable of and what it will cost.

Does that mean Convex will be the absolute fastest in every benchmark vs. every competitor? I mean, almost certainly not. Let’s just say no.

But we have a strong track record now from growing our business. And we know that for many customers and applications, Convex is an excellent fit: economical, reliable, productive, and yes, “fast.”

With our team’s finite time, this is the most valuable way we can provide clarity around cost and scaling for Convex customers—even though we admit throwing bar charts back and forth with competitors makes for good Twitter.
